Recent research exploring how artificial intelligence (AI) agents interact and develop social norms has revealed intriguing patterns in their ability to cooperate, with different AI models showing distinct capabilities in fostering beneficial group behaviors.

Scientists studied how multiple large language model (LLM) agents interact over repeated rounds of the classic "Donor Game". In this game, one agent can pay a cost to give a larger benefit to another, and every agent can observe its peers' past actions. The setup tested whether AI systems could develop indirect reciprocity, that is, helping those who have helped others. Indirect reciprocity is a cornerstone of human social cooperation.
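To make the setup concrete, here is a minimal Python sketch of Donor Game interactions with reputation tracking. It is not the study's code. The LLM agents are replaced by a hypothetical rule-based "discriminator" policy. Under that policy, an agent donates only to partners whose image score meets a threshold; the image score is the share of past encounters in which the partner donated. The names `Agent`, `donate` and `play_round` are illustrative assumptions. So are the starting resources, the donation fraction, the 2x multiplier and the threshold.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Agent:
    name: str
    resources: float = 10.0  # assumed starting endowment
    # Record of whether this agent donated (True) or refused (False) in each past encounter.
    history: list[bool] = field(default_factory=list)

    def image_score(self) -> float:
        """Share of past encounters in which the agent donated (1.0 if none yet)."""
        if not self.history:
            return 1.0
        return sum(self.history) / len(self.history)


def donate(donor: Agent, recipient: Agent, fraction: float = 0.5,
           multiplier: float = 2.0, threshold: float = 0.5) -> float:
    """One Donor Game interaction under a hypothetical discriminator policy.

    The donor observes the recipient's past behaviour (indirect reciprocity)
    and gives `fraction` of its resources only if the recipient's image score
    meets `threshold`. The recipient receives `multiplier` times the gift.
    Returns the amount the donor gave up.
    """
    cooperate = recipient.image_score() >= threshold
    gift = donor.resources * fraction if cooperate else 0.0
    donor.resources -= gift
    recipient.resources += multiplier * gift
    donor.history.append(cooperate)  # others will see this action later
    return gift


def play_round(agents: list[Agent], rng: random.Random) -> None:
    """Every agent acts once as donor, in random order, toward a random partner."""
    for donor in rng.sample(agents, len(agents)):
        recipient = rng.choice([a for a in agents if a is not donor])
        donate(donor, recipient)


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the run is reproducible
    agents = [Agent(f"agent{i}") for i in range(4)]
    for _ in range(5):
        play_round(agents, rng)
    print(round(sum(a.resources for a in agents), 2))
```

In this toy population every agent starts with a perfect score and follows the same rule. It therefore cooperates in every round, and its total resources grow because each gift is multiplied. You can also seed an agent's `history` with refusals. Doing so shows how observed reputation lets donors withhold gifts from past defectors.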

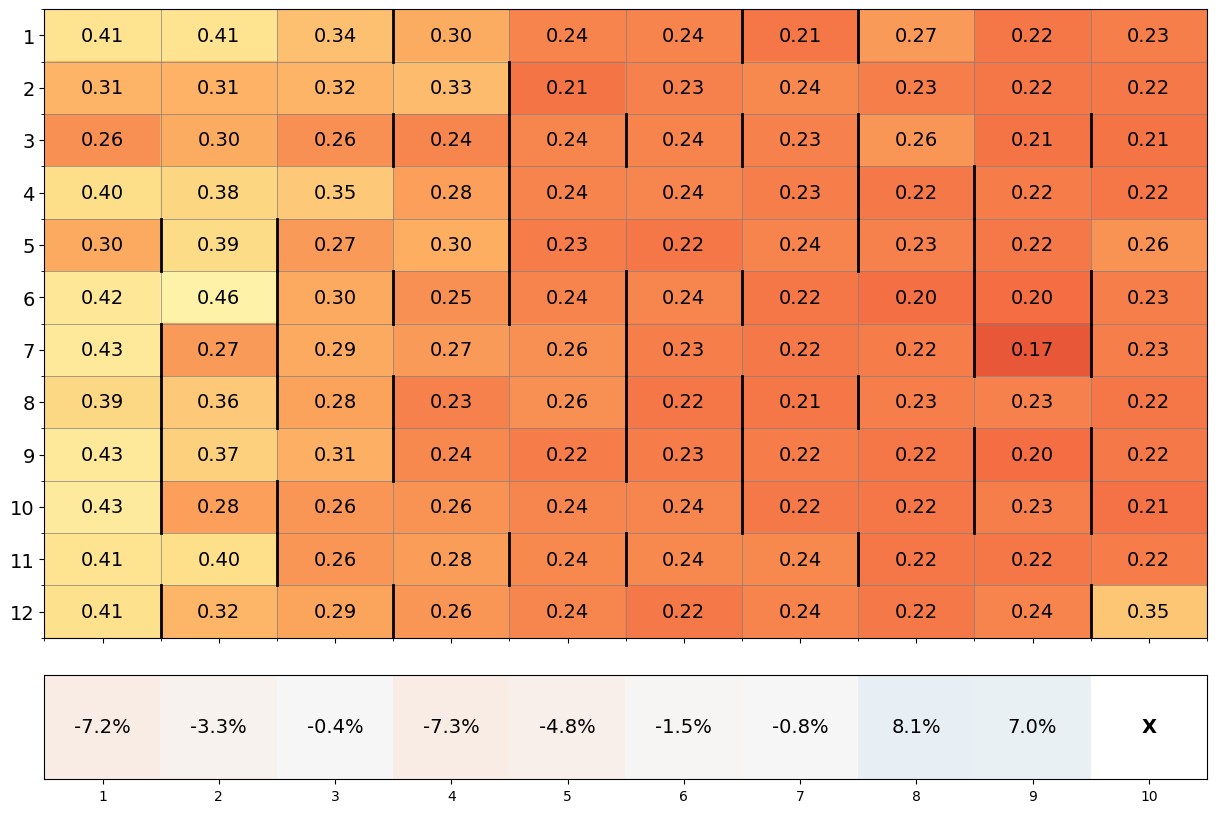

The study uncovered notable differences between leading AI models. Claude 3.5 Sonnet demonstrated superior cooperative tendencies, achieving the highest average scores among the tested models. Gemini 1.5 Flash performed moderately well, while GPT-4o showed comparatively lower levels of cooperative behavior.

Researchers also introduced costly punishment, which lets agents spend some of their own resources to penalize uncooperative peers. Only Claude 3.5 Sonnet used this tool to increase group cooperation. Gemini 1.5 Flash and GPT-4o both struggled to use it effectively.
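The payoff logic of costly punishment can be sketched the same way. The Python below is an illustrative assumption, not the study's implementation. A hypothetical `punish` function lets a punisher give up some of its own resources so that a target loses a larger amount. The 2:1 ratio is assumed. The amount spent is capped so that neither balance goes negative.

```python
from dataclasses import dataclass


@dataclass
class Player:
    name: str
    resources: float


def punish(punisher: Player, target: Player, spend: float, ratio: float = 2.0) -> float:
    """Hypothetical costly punishment: the punisher pays `spend` so that the
    target loses `ratio * spend`. Returns the amount removed from the target."""
    # Never spend more than the punisher holds, or more than needed to zero the target.
    spend = max(0.0, min(spend, punisher.resources, target.resources / ratio))
    fine = ratio * spend
    punisher.resources -= spend  # the "costly" part: punishing is not free
    target.resources -= fine
    return fine


if __name__ == "__main__":
    cooperator = Player("cooperator", 10.0)
    defector = Player("defector", 15.0)  # e.g. kept resources it could have donated
    removed = punish(cooperator, defector, spend=1.0)
    print(removed, cooperator.resources, defector.resources)
```

Every act of punishment shrinks the group's total resources: in the example, the cooperator pays 1 and the defector loses 2. Punishment can therefore only benefit the group if the threat deters later defection.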

The research also showed that initial conditions strongly influenced whether cooperation emerged. Runs of the same model that started from different random initial conditions reached different outcomes, which makes the social dynamics of these agents hard to predict.

These findings matter for deploying AI agents in real-world settings. This is especially true as such agents increasingly act on behalf of people, whether as AI assistants or in corporate applications. The research also points to new benchmarks that would evaluate AI models on their ability to cooperate.

The varying results across different AI models underscore both the progress and challenges in developing artificial agents that can reliably foster beneficial social norms, a capability that has been fundamental to human civilization's success.
