DeepMind has announced that its latest artificial intelligence system, AlphaGeometry2, outperforms the average International Mathematical Olympiad (IMO) gold medalist on the competition's geometry problems.

On a benchmark of 50 formalized geometry problems drawn from IMO competitions over the past 25 years, AlphaGeometry2 solved 42, or 84%, up from its predecessor's 54% success rate. That exceeds the average gold medalist score of 40.9 on the same set.
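The benchmark figures can be put on a common scale with a few lines of arithmetic. The sketch below uses only the numbers quoted in this article; the variable names are illustrative, and it assumes the predecessor's 54% rate refers to the same 50-problem set.

```python
# Figures quoted in the article; nothing here is measured independently.
BENCHMARK_SIZE = 50
AG2_SOLVED = 42
GOLD_MEDALIST_AVG_SOLVED = 40.9
PREDECESSOR_RATE = 0.54  # assumed to refer to the same 50 problems


def solve_rate(solved: float, total: int) -> float:
    """Return the fraction of problems solved."""
    return solved / total


ag2_rate = solve_rate(AG2_SOLVED, BENCHMARK_SIZE)
gold_rate = solve_rate(GOLD_MEDALIST_AVG_SOLVED, BENCHMARK_SIZE)
# Convert the predecessor's rate back into a problem count for comparison.
predecessor_solved = round(PREDECESSOR_RATE * BENCHMARK_SIZE)

print(f"AlphaGeometry2:        {ag2_rate:.1%}")
print(f"Average gold medalist: {gold_rate:.1%}")
print(f"Predecessor:           {predecessor_solved} of {BENCHMARK_SIZE} problems")
```

Expressed this way, the margin over the average gold medalist is about one problem out of 50, while the gain over the predecessor is much larger.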

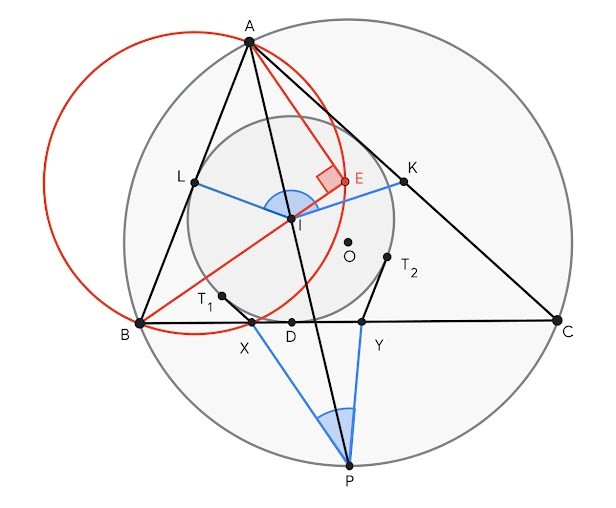

The system combines two key components: a language model based on Google's Gemini AI and a specialized "symbolic engine." The Gemini model suggests potential problem-solving steps in mathematical language, while the symbolic engine verifies these suggestions using logical rules and principles.
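The division of labor described above, where one component proposes steps and another checks them, can be illustrated with a toy propose-and-verify loop. This is a minimal sketch and not DeepMind's implementation: the facts are plain strings, `RULES` is a hypothetical two-rule knowledge base, and `toy_proposer` is a hard-coded stand-in for the language model that deliberately includes an unsupported suggestion.

```python
from typing import Callable, FrozenSet, Iterable, List, Optional, Set, Tuple

Fact = str
Rule = Tuple[FrozenSet[Fact], Fact]  # (premises, conclusion)

# Hypothetical toy rules about an isosceles triangle; real rule sets are far richer.
RULES: List[Rule] = [
    (frozenset({"AB = AC"}), "angle ABC = angle ACB"),
    (
        frozenset({"angle ABC = angle ACB", "M is midpoint of BC"}),
        "AM perpendicular to BC",
    ),
]


def verifies(step: Fact, known: Set[Fact], rules: Iterable[Rule]) -> bool:
    """Symbolic check: does some rule derive `step` from facts already known?"""
    return any(concl == step and prem <= known for prem, concl in rules)


def toy_proposer(known: Set[Fact]) -> List[Fact]:
    """Stand-in for the language model: candidate next steps, not all valid."""
    return [
        "AM perpendicular to BC",  # valid only once the base angles are known
        "AB = BC",                 # unsupported by any rule, always rejected
        "angle ABC = angle ACB",
    ]


def solve(
    premises: Iterable[Fact],
    goal: Fact,
    proposer: Callable[[Set[Fact]], List[Fact]],
    rules: List[Rule],
    max_rounds: int = 10,
) -> Optional[List[Fact]]:
    """Alternate between proposing steps and verifying them until the goal is known."""
    known: Set[Fact] = set(premises)
    proof: List[Fact] = []
    for _ in range(max_rounds):
        if goal in known:
            return proof
        # Keep only new suggestions that the symbolic check accepts.
        accepted = [
            s for s in proposer(known) if s not in known and verifies(s, known, rules)
        ]
        if not accepted:
            return None  # no verified progress, so give up
        proof.extend(accepted)
        known.update(accepted)
    return proof if goal in known else None


proof = solve(
    {"AB = AC", "M is midpoint of BC"},
    "AM perpendicular to BC",
    toy_proposer,
    RULES,
)
print(proof)
```

The point of the split is that the proposer is allowed to be wrong: a suggestion only enters the proof after the rule-based check accepts it, so unreliable guesses cost search time but not correctness.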

Training data for olympiad geometry is scarce, so DeepMind generated more than 300 million synthetic theorems and proofs and used them to train the system.

However, the system has limitations. It cannot handle problems involving a variable number of points, nonlinear equations, or inequalities. On a harder set of 29 problems that were nominated for the IMO but have not yet appeared in a competition, AlphaGeometry2 solved only 20, or about 69%.

The achievement has sparked discussions about the future of AI in mathematics. "I imagine it won't be long before computers are getting full marks on the IMO," says Kevin Buzzard, a mathematician at Imperial College London.

The result is a milestone for AI in mathematics, and it points to possible applications in other areas of mathematics and science, including complex engineering calculations.

DeepMind's success with AlphaGeometry2 demonstrates the benefits of combining neural networks with traditional rule-based systems, potentially paving the way for more advanced AI problem-solving tools in the future.