Recent research has exposed alarming security vulnerabilities in the AI platform DeepSeek, raising serious concerns about its safety and reliability for users.

A joint investigation by Cisco's AI security team and the University of Pennsylvania found that DeepSeek-R1 failed to block any of the 50 harmful prompts tested, which were drawn from the HarmBench benchmark. This 100% attack success rate means the model can be easily manipulated into generating harmful content, including disinformation, cybercrime instructions, and malware code.
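The headline figure is an attack success rate: the share of test cases in which the model produced the requested output instead of refusing. A minimal sketch of that arithmetic follows. The function name and the `blocked` flags are hypothetical and only mirror the reported 50-out-of-50 result; they are not the researchers' data or tooling.

```python
def attack_success_rate(blocked: list[bool]) -> float:
    """Return the fraction of test cases that were NOT blocked by the model."""
    if not blocked:
        raise ValueError("no results to score")
    successes = sum(1 for was_blocked in blocked if not was_blocked)
    return successes / len(blocked)


# Hypothetical outcomes mirroring the reported result: 50 tests, none blocked.
results = [False] * 50
print(f"{attack_success_rate(results):.0%}")  # prints "100%"
```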

"This isn't just a few security gaps - there appear to be no meaningful security controls at all," noted one of the researchers, who requested anonymity due to the sensitive nature of the findings.

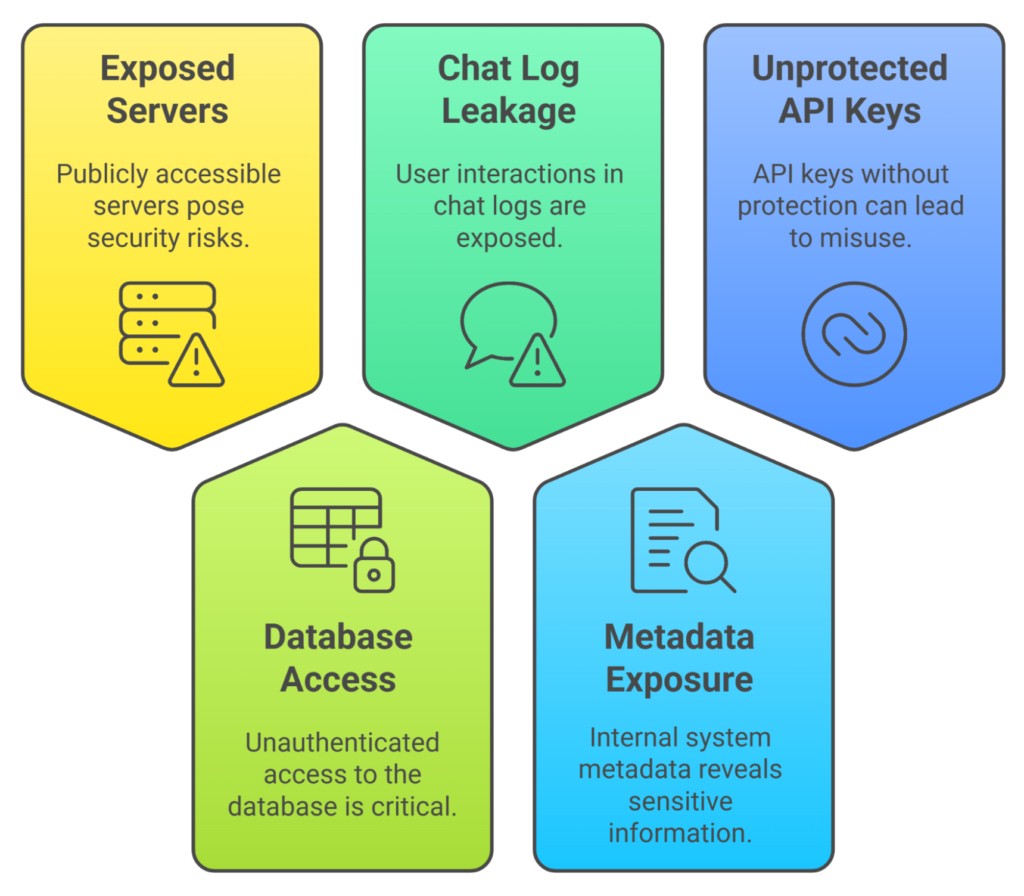

Making matters worse, security firm Wiz discovered that DeepSeek had left one of its own databases exposed online with no authentication. The unsecured database contained user chat logs, API keys that could be used to hijack accounts, and internal system data that could give malicious actors a roadmap.

DeepSeek's response to the database exposure raised additional red flags. The company had no established security contact or vulnerability reporting process. Although it eventually secured the database after being alerted through LinkedIn messages, it never publicly acknowledged the incident or clarified whether user data had been compromised.

Industry experts emphasize that these issues reflect deeper problems with DeepSeek's approach to security. Unlike other major AI companies that extensively test their models and regularly patch vulnerabilities, DeepSeek appears to have rushed to market without basic security measures.

For organizations currently using or considering DeepSeek, security professionals recommend:

- Immediately blocking access to DeepSeek services

- Disabling any API access

- Monitoring for suspicious traffic that could indicate compromised API keys

- Conducting thorough security and compliance risk assessments
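The monitoring recommendation above could be approached with a simple log scan. The sketch below is illustrative only: the log format (`<timestamp> <client_ip> <host>`), the function names, and the watched-domain list are all assumptions, so adapt them to whatever your proxy or DNS logs actually record and confirm the domains against your own inventory.

```python
from collections import Counter

# Assumption: domains to watch. Verify against your own threat intelligence.
WATCHED_DOMAINS = ("deepseek.com",)


def is_watched(host: str) -> bool:
    """True if host is a watched domain or one of its subdomains."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in WATCHED_DOMAINS)


def flag_clients(log_lines):
    """Count requests per client IP to watched domains.

    Assumes a hypothetical whitespace-separated format:
    '<timestamp> <client_ip> <host>'. Malformed lines are skipped.
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) < 3:
            continue
        _, client_ip, host = parts[:3]
        if is_watched(host):
            hits[client_ip] += 1
    return hits


# Hypothetical sample log lines for illustration.
sample = [
    "2025-02-01T10:00:00Z 10.0.0.5 api.deepseek.com",
    "2025-02-01T10:00:02Z 10.0.0.5 chat.deepseek.com",
    "2025-02-01T10:00:03Z 10.0.0.9 example.org",
    "2025-02-01T10:00:04Z 10.0.0.7 notdeepseek.com",
]
for ip, count in flag_clients(sample).items():
    print(ip, count)
```

Matching on the exact domain or a dot-prefixed suffix avoids false positives from lookalike hosts such as `notdeepseek.com`. Clients that keep appearing after access has been blocked are the ones worth investigating for leaked or still-active API keys.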

While DeepSeek may improve its security practices over time, its current state presents unacceptable risks. The combination of a completely jailbreakable AI model and demonstrated negligence in protecting user data makes it a liability for any organization prioritizing security.

The DeepSeek situation serves as a stark reminder that as AI technology rapidly advances, security cannot be an afterthought. Organizations must carefully evaluate AI tools with security as the primary consideration, not just capabilities and features.