A new open-source simulation system called "Genesis" promises to change how robots learn, compressing decades of training into mere hours inside accelerated virtual environments.

Unveiled by a collaborative team of university and industry researchers, Genesis can run robot training simulations up to 430,000 times faster than real time. At those speeds, a single hour of computer time can give a robot the equivalent of 10 years or more of training experience.
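The link between a speedup factor and simulated experience is plain arithmetic. The short sketch below works through the two figures quoted above. It assumes a 365-day year and a constant speedup, which real workloads will not sustain across every scene and hardware setup; the function names are illustrative and are not part of Genesis.

```python
HOURS_PER_YEAR = 365 * 24  # assumption: 365-day year, no leap days


def simulated_years(speedup: float, compute_hours: float) -> float:
    """Years of simulated experience gained from `compute_hours` of wall-clock time."""
    return speedup * compute_hours / HOURS_PER_YEAR


def speedup_needed(sim_years: float, compute_hours: float) -> float:
    """Speedup factor required to fit `sim_years` of experience into `compute_hours`."""
    return sim_years * HOURS_PER_YEAR / compute_hours


# One hour at the quoted 430,000x rate.
peak_years = simulated_years(430_000, 1.0)

# Speedup that corresponds to exactly 10 years of experience per hour.
ten_year_rate = speedup_needed(10, 1.0)

print(f"1 hour at 430,000x  = {peak_years:.1f} simulated years")
print(f"10 years in 1 hour  = {ten_year_rate:,.0f}x real time")
```

Under these assumptions, an hour at the full 430,000x rate works out to about 49 simulated years, while 10 years per hour corresponds to a sustained rate of about 87,600x. One plausible reading is that the 10-year figure reflects typical throughput rather than the peak.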

The system, developed under the leadership of Zhou Xian at Carnegie Mellon University, processes physics calculations up to 80 times faster than existing robot simulators. Using standard graphics cards, Genesis can run 100,000 copies of a simulation simultaneously, dramatically accelerating the neural network training process for robots.
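To give a sense of what running many copies of a simulation at once looks like in code, here is a toy sketch using NumPy on the CPU. It is not Genesis code and does not reflect how Genesis is implemented. It assumes a deliberately simple physics model (point masses bouncing on a floor), and `step_batch`, `n_envs`, and the other names are hypothetical. The idea it illustrates is that the state of every environment lives in one array, so a single vectorized update advances all copies together.

```python
import numpy as np


def step_batch(pos, vel, dt=0.01, gravity=-9.81, restitution=0.5):
    """Advance every environment by one timestep (semi-implicit Euler).

    `pos` and `vel` are 1-D arrays with one entry per environment.
    """
    vel = vel + gravity * dt           # apply gravity to all environments
    pos = pos + vel * dt               # integrate position with the new velocity
    hit = pos < 0.0                    # environments where the mass reached the floor
    pos = np.where(hit, 0.0, pos)      # clamp those masses to the floor
    vel = np.where(hit, -restitution * vel, vel)  # bounce with energy loss
    return pos, vel


n_envs = 100_000                       # number of simulation copies (illustrative)
rng = np.random.default_rng(0)
pos = rng.uniform(0.5, 2.0, size=n_envs)  # random drop heights in metres
vel = np.zeros(n_envs)

# One simulated second: each loop iteration steps all 100,000 copies at once.
for _ in range(100):
    pos, vel = step_batch(pos, vel)

print(pos.shape, float(pos.min()), float(pos.max()))
```

A GPU-based simulator applies the same batching idea to far richer physics. The per-step cost is spread across many parallel threads, which makes a very large number of simultaneous environments practical.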

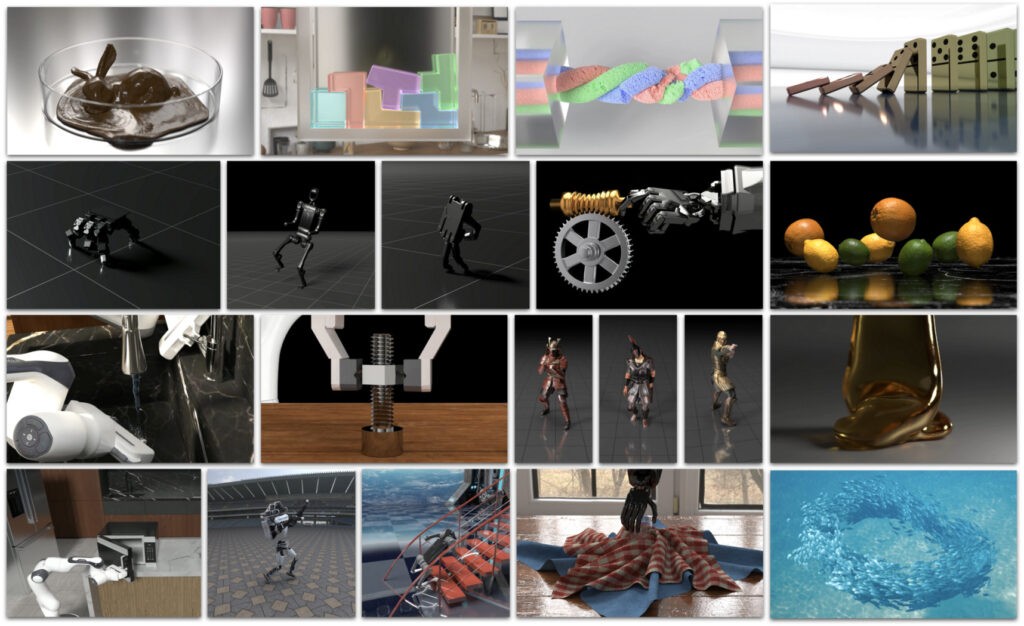

A standout feature of Genesis is its ability to generate "4D dynamic worlds" from simple text descriptions. Using vision-language models, researchers can create complete virtual training environments through natural language commands rather than complex programming. These AI-generated worlds include realistic physics, camera movements, and object behaviors.

The platform's capabilities extend beyond basic robotics training. Genesis can generate character motion, interactive 3D scenes, and facial animations, potentially revolutionizing the creation of AI-generated games and videos.

What sets Genesis apart is its Python-first approach, which makes it accessible to researchers worldwide on ordinary computers. This democratization of high-speed robot training lowers barriers that previously included specialized equipment and complex programming knowledge.

While the generative features are still in development, the core simulation platform is available on GitHub as an open-source project, welcoming community contributions. This open approach reflects the team's vision of making advanced robotics development accessible to all researchers.

The system has already demonstrated practical applications: robots trained in Genesis have learned complex movements such as backflips, and those skills have been transferred to both quadruped and soft robots in real-world testing.